Amortized Inference

backpropagation

The fundamental algorithm for training artificial neural networks by efficiently calculating gradients of the loss function with respect to network weights using the chain rule.

You can see a fully derived backpropagation example on both a sigmoid and softmax-output neural network here.

Beam-search decoding

Chain Parsing (CSP Topology)

The real-time translation of Unix shell operators (|, &&, ||, ;) into a simplified, linear architecture of Communicating Sequential Processes (CSP). Rather than the LLM orchestrating multiple independent function calls, Chain Parsing allows the agent to construct an on-the-fly execution graph within a single string constraint. This relies heavily on the Rule of Composition, passing data seamlessly from one process to the next.

See [1], [2] and [3] for more resources on the formalization of the subject.
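As a rough illustration of the parsing step, the sketch below splits a command string on the four operators and returns a linear chain of stages. The function name `parse_chain` is hypothetical, and the sketch ignores quoting and escaping, so it should not be read as the parser any particular agent framework uses.

```python
import re
from typing import List, Optional, Tuple

# Longer operators come first so "||" and "&&" are not split into single characters.
_OPERATOR_PATTERN = re.compile(r"(\|\||&&|\||;)")


def parse_chain(command: str) -> List[Tuple[str, Optional[str]]]:
    """Split a command string into (stage, operator_to_next_stage) pairs.

    Simplifying assumption: quotes and escapes are not handled, so an
    operator inside a quoted string would also be treated as a separator.
    """
    parts = [p.strip() for p in _OPERATOR_PATTERN.split(command)]
    stages = parts[0::2]     # even positions hold the commands
    operators = parts[1::2]  # odd positions hold the operators between them
    chain = []
    for i, stage in enumerate(stages):
        if not stage:
            continue  # a trailing operator leaves an empty final stage
        op = operators[i] if i < len(operators) else None
        chain.append((stage, op))
    return chain


chain = parse_chain("cat log.txt | grep error && echo found || echo none; ls")
for stage, op in chain:
    print(stage, "->", op)
```

Each pair records which operator links a stage to the next one, which is enough for an executor to decide whether to pipe output (`|`), continue on success (`&&`), continue on failure (`||`), or continue unconditionally (`;`).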

chunking (rag)

https://old.reddit.com/r/Rag/comments/1r47duk/we_benchmarked_7_chunking_strategies_most_best/

Direct Pixel Optimization

If we freeze a model’s weights (for example, a ResNet10 classifier), feed it a low-resolution image, set input_batch.requires_grad = True, and define a structural loss function, we can optimize the image directly:
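One way to write the resulting update, as a sketch with assumed notation ($x_t$ is the image at step $t$, $f_\theta$ the frozen model, $\mathcal{L}$ the structural loss, and $\eta$ a step size; none of these symbols come from the original note):

```latex
x_{t+1} = x_t - \eta \, \nabla_{x} \mathcal{L}\big(f_\theta(x_t)\big), \qquad \theta \text{ held fixed}
```

The gradient is taken with respect to the pixels $x$, not the weights $\theta$, which is what setting requires_grad on the input makes possible.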

See Deep Image Prior [1] for image restoration tasks. However, there is a better-suited modeling approach for such tasks, called Parametric Feedforward Networks.

Discriminative Region Problem

When a standard classifier ends up only locating the most distinguishing features in order to output a confident classification, omitting other important traits.

Dynamic Masking Augmentation Techniques

A limitation of the Park et al. formalization is that dynamic masking techniques, such as PuzzleMix [1] and AutoMix [2], cannot be formally explained by their framework, as their theorem relies on the mask being stochastic and independent of pixel values. When we add pixel-level awareness, we can no longer approximate the loss function as a straightforward input gradient.

Empirically, though they achieve state-of-the-art results against MixUp and CutMix in selected setups, the overall impact on head class prediction is small. These techniques also take significantly more compute per batch than stochastic techniques.

Exponential Moving Average

After every single training step, we update the shadow model by taking a massive fraction of its past self, and adding a tiny fraction of the new active weights:
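A minimal sketch of that update on plain Python lists. The function name `ema_update` and the decay values are assumptions for illustration; in practice the decay is usually close to 1 so that the shadow model changes slowly.

```python
def ema_update(shadow, active, decay=0.999):
    """Return new shadow weights: decay * shadow + (1 - decay) * active."""
    return [decay * s + (1.0 - decay) * a for s, a in zip(shadow, active)]


shadow_weights = [1.0, 2.0]   # the slowly moving shadow model
active_weights = [2.0, 0.0]   # weights after the latest optimizer step

# A smaller decay is used here only to make the change visible in one step.
shadow_weights = ema_update(shadow_weights, active_weights, decay=0.9)
print(shadow_weights)
```

The shadow weights move only a small step toward the active weights, which smooths out step-to-step noise in training.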

F1 score

Harmonic mean of Precision and Recall. We use it when we want a single metric that balances both, especially when we have an uneven class distribution.
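As a small worked sketch, the harmonic mean can be computed directly from the two metrics. The function name `f1_score` and the zero-division convention (returning 0.0 when both inputs are 0) are choices made for this example.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall; 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# 50% precision and 80% recall give an F1 below their arithmetic mean (0.65).
print(round(f1_score(0.5, 0.8), 4))
```

Because the harmonic mean is pulled toward the smaller value, a model cannot score well on F1 by maximizing only one of the two metrics.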

False Positive Rate (FPR)

Generative Image Augmentation

Earlier work has shown that using diffusion models to synthesize and augment data improves classification results on ImageNet [1], [2]. Given the latest developments in image generation models, these have moved to more sophisticated enhancements, such as background diversification and saliency-aware generation pipelines [3], [4], [5]. Generally, these techniques isolate the ground-truth subject via segmentation or feature extraction, and use the generative model as an augmenter to build the surrounding context and handle the blending.

Greedy Decoding

ImageNet

ImageNet [1] is a large-scale visual dataset designed for object recognition research. It became foundational to modern computer vision, especially after the University of Toronto team’s breakthrough in the 2012 ImageNet Large Scale Visual Recognition Challenge using AlexNet [2]. The original dataset includes 14 million images and 21,841 synsets (classes), though teams often evaluate on the ILSVRC Subset benchmark [3] consisting of 1.2 million training images and 1,000 classes.

The original ImageNet images were not exhaustively labeled: each image carries a single label, so an image labeled “dog” might also contain trees, cars, or people. ILSVRC was essential for adding stricter quality control, as well as bounding box and object localization for more nuanced identification.

Inception-v3

Inception-v3 is a 48-layer deep convolutional neural network (CNN) for image classification, developed by Google in 2016 to improve accuracy while reducing computational cost.

Introduced label smoothing.

Jetson AGX Orin

Hardware from Nvidia.

L1 Regularization (Lasso)

A method of penalizing weights proportional to their L1 norm, the sum of their absolute values (Manhattan Distance). Pushes weights to zero, encourages sparsity.

$$J(\mathbf{w}) = L(\mathbf{w}) + \lambda \lVert \mathbf{w} \rVert_1$$

Where:

- $J(\mathbf{w})$: The final objective function to be minimized.

- $L(\mathbf{w})$: The original loss function (e.g., MSE, Cross-Entropy) measuring the error on the training data.

- $\lambda$ (Lambda): The regularization strength hyperparameter. Controls the trade-off between fitting the data and keeping weights small.

- $\lVert \mathbf{w} \rVert_1$: The L1 norm of the weight vector.

L2 Regularization (Ridge)

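A method of penalizing weights proportional to their squared L2 norm, the sum of their squared values (squared Euclidean distance from the origin). Shrinks all weights toward zero without making them exactly zero, so it discourages large weights rather than encouraging sparsity.

$$J(\mathbf{w}) = L(\mathbf{w}) + \lambda \lVert \mathbf{w} \rVert_2^2$$

Where:

- $J(\mathbf{w})$: The final objective function to be minimized.

- $L(\mathbf{w})$: The original loss function (e.g., MSE, Cross-Entropy) measuring the error on the training data.

- $\lambda$ (Lambda): The regularization strength hyperparameter. Controls the trade-off between fitting the data and keeping weights small.

- $\lVert \mathbf{w} \rVert_2^2$: The squared L2 norm of the weight vector.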
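To compare the L1 and L2 penalties side by side, here is a small sketch on a toy weight vector. The function names, the example weights, the data loss of 1.0, and the strength of 0.1 are all illustrative assumptions.

```python
def l1_penalty(weights, lam):
    """lam times the sum of absolute values (L1 norm)."""
    return lam * sum(abs(w) for w in weights)


def l2_penalty(weights, lam):
    """lam times the sum of squares (squared L2 norm)."""
    return lam * sum(w * w for w in weights)


weights = [0.5, -2.0, 0.0]
data_loss = 1.0  # stand-in for the original loss on the training data

l1_objective = data_loss + l1_penalty(weights, lam=0.1)
l2_objective = data_loss + l2_penalty(weights, lam=0.1)
print(l1_objective, l2_objective)
```

The squared penalty is dominated by the largest weight (-2.0 contributes 4.0 of the 4.25 total), while the absolute-value penalty charges every unit of magnitude at the same rate, which is why it keeps pushing small weights all the way to zero.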

Linear Attention

Standard transformers apply softmax in their attention layers. This introduces complexity that is quadratic in sequence length, and there are modern techniques that approximate softmax attention and reduce the complexity to linear time.

[1]
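One family of such approximations replaces the softmax with a positive feature map, so the key-value product can be computed once and reused for every query. The NumPy sketch below uses the `elu(x) + 1` feature map as an assumption; it is not necessarily the method in the cited reference, and it covers only the non-causal case. The quadratic function is included purely to check that both orderings give the same result.

```python
import numpy as np


def feature_map(x):
    """elu(x) + 1, which is positive everywhere."""
    return np.where(x > 0, x + 1.0, np.exp(x))


def linear_attention(Q, K, V):
    """Cost linear in sequence length n: never builds the n x n matrix."""
    Qf, Kf = feature_map(Q), feature_map(K)
    kv = Kf.T @ V           # (d, d_v) summary of all keys and values
    z = Kf.sum(axis=0)      # (d,) normalizer terms
    return (Qf @ kv) / (Qf @ z)[:, None]


def quadratic_reference(Q, K, V):
    """Same kernel attention, computed through the full n x n matrix."""
    Qf, Kf = feature_map(Q), feature_map(K)
    A = Qf @ Kf.T
    A = A / A.sum(axis=1, keepdims=True)
    return A @ V


rng = np.random.default_rng(0)
Q = rng.normal(size=(6, 4))
K = rng.normal(size=(6, 4))
V = rng.normal(size=(6, 3))
print(np.allclose(linear_attention(Q, K, V), quadratic_reference(Q, K, V)))
```

The saving comes from associativity: multiplying keys by values first yields a small `(d, d_v)` matrix, so the cost grows with `n` instead of `n * n`.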

Linear Scaling Law

Batch size versus learning rate dynamics [1]:

If we multiply the batch size by a factor of $k$, we must also multiply the learning rate by $k$:

$$B \rightarrow kB \quad \Longrightarrow \quad \eta \rightarrow k\eta$$
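A small arithmetic sketch of the rule. The function name `scale_learning_rate` and the base values (learning rate 0.1 at batch size 256) are assumptions chosen for illustration.

```python
def scale_learning_rate(base_lr, base_batch_size, new_batch_size):
    """Scale the learning rate by the same factor as the batch size."""
    factor = new_batch_size / base_batch_size
    return base_lr * factor


# Going from batch size 256 to 1024 is a factor of 4.
print(scale_learning_rate(0.1, 256, 1024))
```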

Mixed-Sample Data Augmentation

In 2022, Park et al. provided a unified formal treatment of mixed-sample data augmentation (MSDA) methods [1], including CutMix [2] and MixUp [3], demonstrating that such techniques are equivalent to designing a spatial decay kernel for gradient regularization. Each technique has its own strengths and limitations. For example, CutMix may introduce multi-label noise, erasing a class that only existed in the cutout area, or introducing a new class that isn’t actually present in the pasted patch. There are further static enhancements on these techniques, such as Fourier Mix (FMix) [4], which produces smooth and continuous patches instead of sharp ones, and ResizeMix [5], which resizes the patching image instead of cropping.

The Park et al. formalization demonstrates how MSDA reshapes the loss landscape, mathematically forcing the model to learn smoother functions by penalizing erratic changes in its input gradients, weighted by how close the data points are (spatial decay). The core of the theorem relies on the stochastic nature of the applicable image augmentation techniques. They used their theorems to formalize Hybrid Mix (HMix) and Gaussian Mix (GMix), intermediate spatial regularization techniques that sit between the CutMix and MixUp distributions.

MSDA Regularization

MixUp blends images globally across all pixels, creating semi-transparent ghost images that hamper structural awareness. MixUp-CAM [1] introduces uncertainty regularization constraints to address this:

This formula combines a classification loss, class-wise entropy regularization, and spatial concentration loss to optimize the MixUp procedure, and prevent the response from being too divergent.

CutMix already enforces spatial formalization through its geometry, such as feature exploration and spatial integrity. There is some research into techniques like semantic proportioning, making the augmentation differentiable, and using dynamic view-scale crops [2], [3], [4] to incrementally improve augmentations. These gains are incremental and limited to selected setups, whereas the original CutMix usually suffices and is the common benchmark.

N-Gram Modeling

Language modeling has evolved from discrete statistical counting to continuous neural representation. Statistical n-gram models, first demonstrated by Claude Shannon in 1948 [1], estimate word probabilities based on raw frequency counts observed in a training corpus. These models inherently suffer from data sparsity, in which sequences not seen during training receive a zero probability. We address this by applying Add-k smoothing, a technique that reassigns probability mass to unseen events to ensure the model remains functional.
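A minimal bigram sketch of Add-k smoothing, assuming whitespace tokenization and a toy corpus; the function names are hypothetical. Every count is incremented by `k`, and the denominator grows by `k` times the vocabulary size so the probabilities for a given context still sum to 1.

```python
from collections import Counter


def train_bigram_counts(tokens):
    """Count contexts and bigrams, and collect the vocabulary."""
    context_counts = Counter(tokens[:-1])
    bigram_counts = Counter(zip(tokens[:-1], tokens[1:]))
    vocab = set(tokens)
    return context_counts, bigram_counts, vocab


def add_k_probability(prev, word, context_counts, bigram_counts, vocab, k=1.0):
    """P(word | prev) with Add-k smoothing."""
    numerator = bigram_counts[(prev, word)] + k
    denominator = context_counts[prev] + k * len(vocab)
    return numerator / denominator


tokens = "the cat sat on the mat".split()
contexts, bigrams, vocab = train_bigram_counts(tokens)

seen = add_k_probability("the", "cat", contexts, bigrams, vocab)    # observed bigram
unseen = add_k_probability("the", "sat", contexts, bigrams, vocab)  # never observed
print(seen, unseen)
```

The unseen bigram receives a small non-zero probability instead of zero, at the cost of taking some mass away from the bigrams that were actually observed.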

In 2003, Neural Probabilistic Language Models were introduced by Bengio et al. [2]. They replaced discrete counts with word embeddings, allowing neural models to address sparsity through interpolation within embedding space, mapping similar words to nearby vector coordinates. This allows for generalization beyond the specific sequences encountered during training.

Nucleus Sampling

Parametric Feedforward Networks

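We feed the model a low-resolution input $x$, and it outputs a high-resolution prediction $\hat{y}$:

$$\hat{y} = f_\theta(x)$$

During training, we calculate the loss between our prediction $\hat{y}$ and the ground-truth high-res image $y$. We backpropagate to update the weights, not pixels:

$$\theta \leftarrow \theta - \eta \, \nabla_\theta \mathcal{L}(\hat{y}, y)$$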

Perceptual Loss

Perceptual loss for super resolution:[1]

We extract the activation maps from deep inside the frozen network (e.g., the output of layer3) and compute the loss between the feature representations, not the pixels:
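A common way to write such a loss, given here as a sketch with assumed notation: $\phi$ is the frozen network truncated at the chosen layer, $\hat{y}$ is the prediction, $y$ is the ground-truth image, and $C \times H \times W$ is the shape of the activation map. The squared L2 distance and the normalization are assumptions; other distances are also used.

```latex
\mathcal{L}_{\text{perc}}(\hat{y}, y) = \frac{1}{C H W} \, \big\lVert \phi(\hat{y}) - \phi(y) \big\rVert_2^2
```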

Poisson Distribution

A discrete probability distribution that expresses the probability of a given number of events occurring in a fixed interval of time or space if these events occur with a known constant mean rate and independently of the time since the last event.

The probability of observing exactly $k$ events is given by the formula:

$$P(X = k) = \frac{\lambda^k e^{-\lambda}}{k!}$$

Where:

- $k$: The actual number of events we are interested in.

- $\lambda$: The average number of events per interval (the rate parameter).

- $e$: Euler’s number (approximately 2.71828).

- $k!$: The factorial of $k$.
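As a quick numerical sketch of the formula, using only the standard library. The function name `poisson_pmf` and the rate of 2 events per interval are illustrative assumptions.

```python
import math


def poisson_pmf(k: int, lam: float) -> float:
    """Probability of exactly k events when the mean rate is lam."""
    return (lam ** k) * math.exp(-lam) / math.factorial(k)


rate = 2.0  # assumed average number of events per interval
for k in range(5):
    print(k, round(poisson_pmf(k, rate), 4))
```

Summing the probabilities over all possible values of `k` gives 1, which is a useful sanity check when implementing the formula by hand.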

Post-Training

Post training [1]

precision (metric)

You’re fishing with a net. You use a wide net, and catch 80 of 100 total fish in a lake. That’s 80% recall. But you also get 80 rocks in your net. That means 50% precision, half of the net’s contents is junk. You could use a smaller net and target one pocket of the lake where there are lots of fish and no rocks, but you might only get 20 of the fish in order to get 0 rocks. That is 20% recall and 100% precision.
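The same fishing example as arithmetic, treating fish caught as true positives, rocks caught as false positives, and fish left in the lake as false negatives. The function names are chosen for this sketch.

```python
def precision(true_pos: int, false_pos: int) -> float:
    """Share of what we caught that is actually fish."""
    caught = true_pos + false_pos
    return true_pos / caught if caught else 0.0


def recall(true_pos: int, false_neg: int) -> float:
    """Share of all fish in the lake that we caught."""
    total = true_pos + false_neg
    return true_pos / total if total else 0.0


# Wide net: 80 fish and 80 rocks caught, 20 fish missed.
wide = (precision(80, 80), recall(80, 20))

# Small net: 20 fish and 0 rocks caught, 80 fish missed.
small = (precision(20, 0), recall(20, 80))

print(wide, small)
```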

Prompt Engineering

Abstract

In many real-world applications, Large Language Models (LLMs) are used to reason over structured tabular data rather than plain text. Examples include risk assessment, customer churn prediction, and medical triage systems. We investigate whether advanced prompt engineering strategies improve LLM performance when making predictions from structured data. We use LLMs for a tabular reasoning task, design and compare prompt engineering techniques, evaluate predictions using classification metrics, and analyze where LLM reasoning fails. Full code implementation and data is available on github.

Prompting Techniques

We have documented all prompt techniques used:

- System-prompt: Sample available in Appendix \ref{sec:titanic-system}

- Zero-shot: Sample available in Appendix \ref{sec:titanic-zero-shot}

- 5-shot: Sample available in Appendix \ref{sec:titanic-5-shot}

- Chain-of-Thought (CoT): Sample available in Appendix \ref{sec:titanic-cot}

- Self-consistency: Sample available in Appendix \ref{sec:titanic-self-consistency}

- Tree-of-Thought: Sample available in Appendix \ref{sec:titanic-tot}

Results

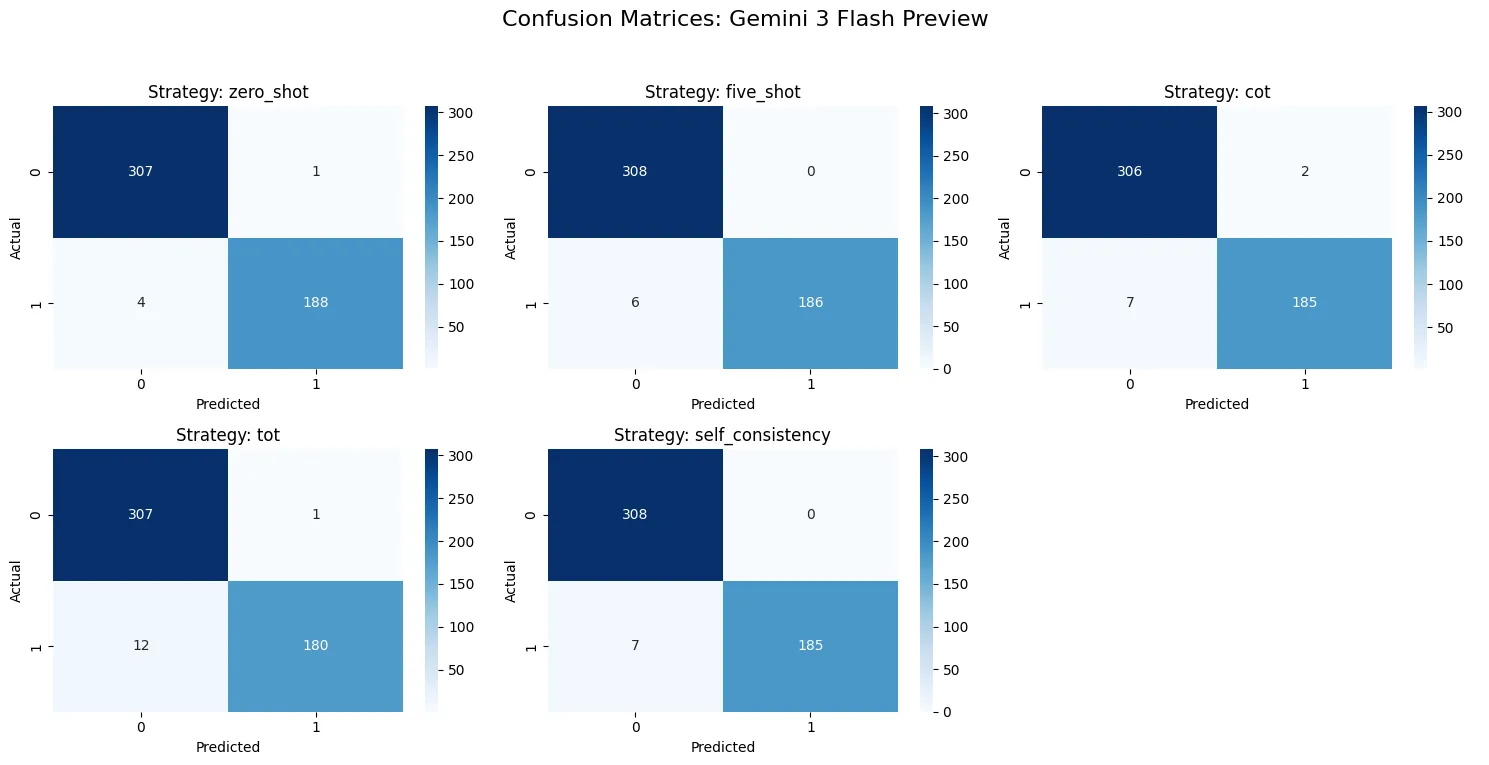

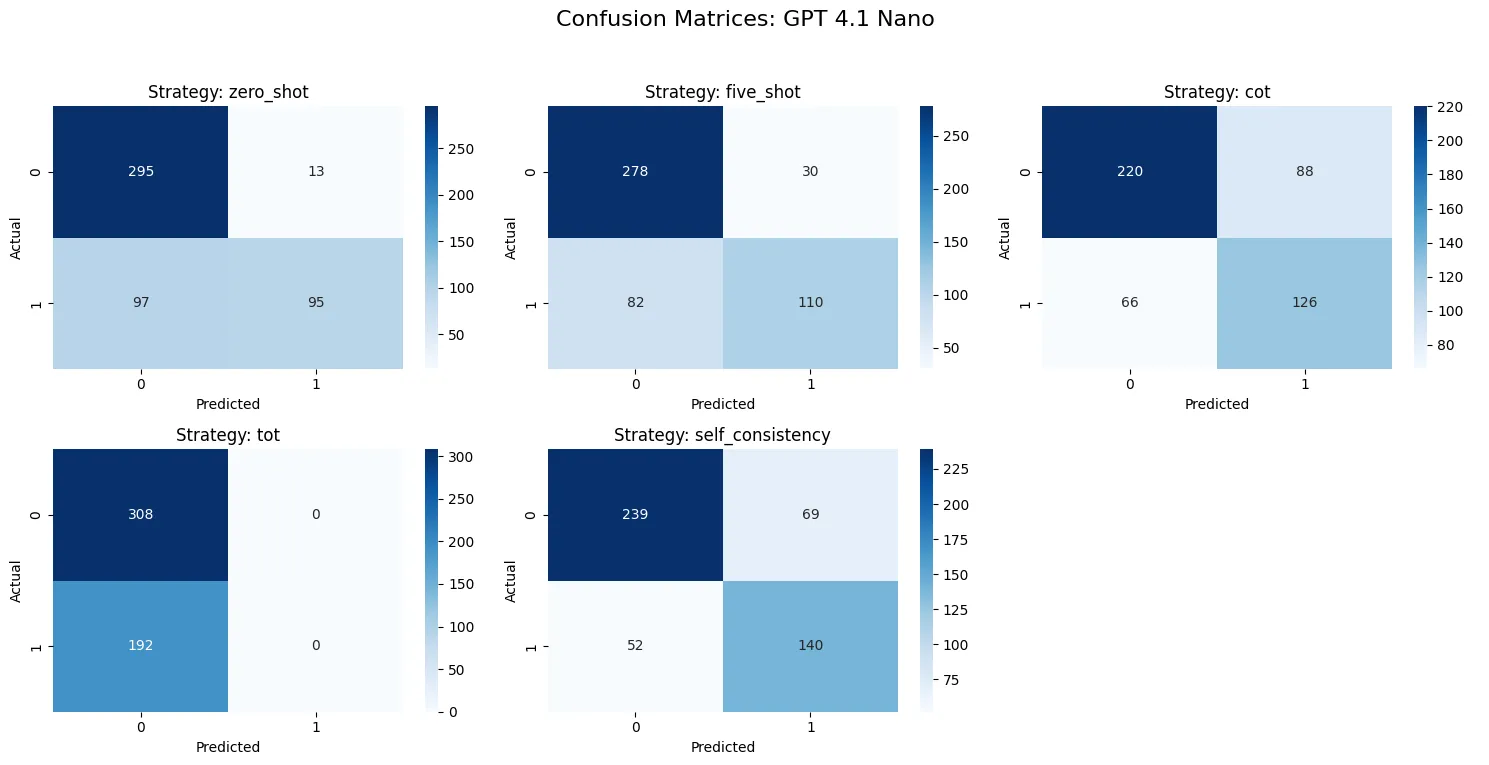

Table: Full comparison results table comparing all prompting techniques.

| LLM | Prompting Method | Accuracy | Precision | Recall | F1 |
|---|---|---|---|---|---|
| google/gemini-3-flash-preview | Zero-shot | 0.990 | 0.994709 | 0.979167 | 0.986877 |
| google/gemini-3-flash-preview | Five-shot | 0.988 | 1.000000 | 0.968750 | 0.984127 |
| google/gemini-3-flash-preview | CoT | 0.982 | 0.989305 | 0.963542 | 0.976253 |
| google/gemini-3-flash-preview | Self-consistency (3) | 0.986 | 1.000000 | 0.963542 | 0.981432 |
| google/gemini-3-flash-preview | ToT | 0.974 | 0.994475 | 0.937500 | 0.965147 |
| openai/gpt-4.1-nano | Zero-shot | 0.780 | 0.879630 | 0.494792 | 0.633333 |
| openai/gpt-4.1-nano | Five-shot | 0.776 | 0.785714 | 0.572917 | 0.662651 |
| openai/gpt-4.1-nano | CoT | 0.692 | 0.588785 | 0.656250 | 0.620690 |
| openai/gpt-4.1-nano | Self-consistency (3) | 0.758 | 0.669856 | 0.729167 | 0.698254 |
| openai/gpt-4.1-nano | ToT | 0.616 | 0.000000 | 0.000000 | 0.000000 |

Hard Case Analysis

gemini-3-flash-preview

Full reasoning traces for 9 misclassified samples for Gemini 3 Flash are in Appendix \ref{sec:titanic-results-gemini}.

The model performed slightly worse when prompted for greater reasoning, overthinking our system information. The model primarily missed 3rd-class males who survived: passengers with IDs 82, 205, 268, 745 and 401. Passenger 808, an 18-year-old 3rd-class female, was predicted to have survived but did not, which was a statistical anomaly. The model primarily made these misclassifications because of its statistical priors. However, the model explicitly hallucinates passenger ID 70 as a “documented historical anomaly” who survived, though this is false.

The Gemini model is markedly more accurate, faster and more available than other SOTA models. It was likely pre-trained on this particular problem. The next highest achiever was openai/gpt-5.2-chat (missed logging it) at 85% accuracy using zero-shot. Because of this, I believe Gemini likely has easily accessible parametric knowledge about this particular dataset, and that our prompts were able to elicit what it learned during pre-training.

gpt-4.1-nano

Full reasoning traces for 9 misclassified samples for GPT 4.1 Nano are in Appendix \ref{sec:titanic-results-nano}.

Our system prompt led the model to consider anomalies in survival, and thus some reasoning responses directly contradicted known statistics about the event. For example, passengers 55 and 140, both higher-class males, were predicted to have survived despite their greater statistical likelihood of dying. The model also underestimated 3rd-class women traveling alone. Overall, enhanced reasoning degraded model performance, whereas the shorter prompts likely engaged activations that depend more on direct statistical assumptions.

recall (metric)

You’re fishing with a net. You use a wide net, and catch 80 of 100 total fish in a lake. That’s 80% recall. But you also get 80 rocks in your net. That means 50% precision, half of the net’s contents is junk. You could use a smaller net and target one pocket of the lake where there are lots of fish and no rocks, but you might only get 20 of the fish in order to get 0 rocks. That is 20% recall and 100% precision.

Also known as the True Positive Rate (TPR).

Regularization

300 samples per class is small, making it inherently difficult for Stochastic Gradient Descent (SGD) to converge reliably. The sample count is simply too low for SGD to reliably find flat, generalizable minima. We have to find a regularization strategy that mitigates this limitation and avoids sharp minima (overfitting). This forces us to apply every state-of-the-art regularization technique we can to make the data as meaningful as possible.

SEAM

It has been demonstrated that standard CAMs fail to cover entire objects, as they are translation and transformation invariant [1]. The localization and segmentation we seek require equivariance, where masks must transform alongside the augmentation. Yude Wang et al. introduced the Self-supervised Equivariant Attention Mechanism (SEAM) and Equivariant Cross Regularization (ECR) loss. One pathway takes the original image, generates its CAM, and then applies a spatial transformation $A$ to that CAM. In the other pathway, $A$ is applied to the input first, yielding the second CAM. The loss then enforces that these two pathways yield the exact same spatial tensor:
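The basic equivariance constraint described here can be sketched as follows, with assumed notation: $I$ is the input image, $F(\cdot)$ is the network that produces a CAM, and $A(\cdot)$ is the spatial transformation. This is the plain equivariant regularization term; the full ECR loss in the paper additionally crosses the outputs of its refinement module, which is not shown.

```latex
\mathcal{L}_{\text{ER}} = \big\lVert A\big(F(I)\big) - F\big(A(I)\big) \big\rVert_1
```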

By adding this to the standard cross-entropy loss, we force the network to produce stable object localizations rather than peaked activations that shift wildly when the image is augmented.

In the original implementation by Yude Wang et al., rotation and translation failed to produce sufficient supervision, while rescaling added major results ( to ).

Skip Connections

In classical hidden layer backprop calculations, we focused heavily on calculating gradients to update our weights, solving for $\frac{\partial L}{\partial W}$. To mathematically reach a weight matrix deep in the early layers of the network, the error signal from the loss function must first survive the journey through all the intermediate activations above it.

We multiply by the weight matrix $W$ and the activation derivative $\sigma'(z)$ at every single layer. When $\sigma'(z)$ is less than 1, or initialized weights are small, these terms multiply together and decay exponentially towards zero, causing the vanishing gradient problem.

When we add skip connections [1] (See our ResNet10 classifier), we add the input as a residual:

$$y = F(x) + x$$

$F$ represents the composite function of all operations. We take the input $x$, pass it through our architecture $F$, and add the result back to the original input.

During backpropagation, we want to pass the gradient of the loss $L$ back to $x$. Applying the chain rule:

$$\frac{\partial L}{\partial x} = \frac{\partial L}{\partial y} \cdot \frac{\partial y}{\partial x}$$

Now, let’s expand the local derivative based on our residual equation:

$$\frac{\partial y}{\partial x} = \frac{\partial F(x)}{\partial x} + 1$$

Substitute this back into the chain rule:

$$\frac{\partial L}{\partial x} = \frac{\partial L}{\partial y} \cdot \frac{\partial F(x)}{\partial x} + \frac{\partial L}{\partial y}$$

The first term, $\frac{\partial L}{\partial y} \cdot \frac{\partial F(x)}{\partial x}$, represents the standard gradient flowing through the weights of the convolutional layers. In a deep network, this term might still vanish to zero.

The second term, $\frac{\partial L}{\partial y}$, is the same gradient from the layer above, passing completely through the + operator. Because the gradient is added rather than multiplied, the loss landscape becomes smoother.

Instead of forcing $F$ to learn a new representation of data, $x$ carries historical information forward. $F$ is now responsible for learning the residual needed to improve the current representation. If a particular layer decides it doesn’t need to learn anything new, the optimizer pushes the weights of $F$ toward zero. The output becomes $y = x$.
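The effect on gradient flow can be sketched with a toy scalar model. It assumes each layer contributes a single local derivative for its learned function (0.1 here, an arbitrary small value), and compares a plain stack, where the local derivatives multiply, against a residual stack, where each layer contributes the derivative plus 1. The function name is hypothetical.

```python
def backprop_through_stack(local_derivs, residual):
    """Propagate an upstream gradient of 1.0 back through a stack of layers.

    local_derivs holds each layer's derivative of its learned function.
    With residual=True each layer computes y = F(x) + x, so its local
    derivative becomes (derivative of F) + 1.
    """
    grad = 1.0
    for d in reversed(local_derivs):
        grad *= (d + 1.0) if residual else d
    return grad


layers = [0.1] * 20  # twenty layers, each with a small local derivative

plain_grad = backprop_through_stack(layers, residual=False)
residual_grad = backprop_through_stack(layers, residual=True)
print(plain_grad, residual_grad)
```

In the plain stack the gradient shrinks by a factor of ten per layer and is effectively zero by the time it reaches the first layer. In the residual stack the identity path keeps every factor at or above 1, so the signal survives even when the learned term is small.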

Sparse mixture-of-expert models

Sparsely activated MoE Models.

The Single-Tool Hypothesis

An agent design paradigm stating that a unified string-composition interface outperforms a catalog of highly typed, discrete function calls. By collapsing function selection into command syntax, the framework shifts the LLM’s cognitive load away from schema mapping (context-switching) and toward natural language generation (CLI syntax), which is highly represented in its training data. A full report describing the concept was written by a backend lead at Manus. Smolagents from Hugging Face approaches the same idea with Python.

Top-K Sampling

WSOL

Weakly Supervised Object Localization (WSOL) is a task where we try to locate objects in an image using only image-level classification labels rather than detailed bounding boxes or masks.

WSSS

Weakly-Supervised Semantic Segmentation (WSSS) is a task where the data lacks pixel-level labels and masks, and the model must learn segmentation from weaker supervision instead. Both WSOL and WSSS apply to our problems, and there is significant literature available.